Building and Operating a Pretty Big Storage System Called S3

I’ve worked in computer systems software – operating systems, virtualization, storage, networks, and security – for my entire career, and the last six years working with Amazon Simple Storage Service (S3) have forced me to think about systems in broader terms than I ever have before.

My role in S3 touches on everything from hard disk mechanics at the very foundation to customer-facing performance experience and API design. I want to share my sense of wonder at the storage systems that are all collectively being built at this point in time, because they are pretty amazing. I’ll cover a few of the interesting nuances of building something like S3, and the lessons learned and sometimes surprising observations from my time in S3.

17 years ago, on a university campus far, far away…

S3 launched on March 14th, 2006, which means it turned 17 this year.

At that time I was finishing my PhD, in the computer lab that had built the Xen hypervisor in Cambridge, UK. After my PhD, a few colleagues and I founded a company, XenSource, to build Xen into enterprise software for virtualization. In hindsight, that startup was a bit of an opportunity missed, because while we focused on selling our technology as an on-premise product to corporate IT administrators, Amazon was a customer and was using these exact same technologies to build the early foundations of cloud computing. XenSource was acquired by Citrix, and I transitioned to academia, taking a faculty position at UBC in Vancouver. I grew my research lab to about 18 graduate students, which was absolutely exhausting, but the research community was invaluable. I then co-founded Coho Data, a storage company that grew to about 150 employees across four countries before the company wound down. Afterward, facing an empty office and depleted motivation to rebuild my research lab, I recognized that working in cloud computing required firsthand experience, leading me to interview with cloud providers and ultimately join Amazon in 2017.

How S3 works

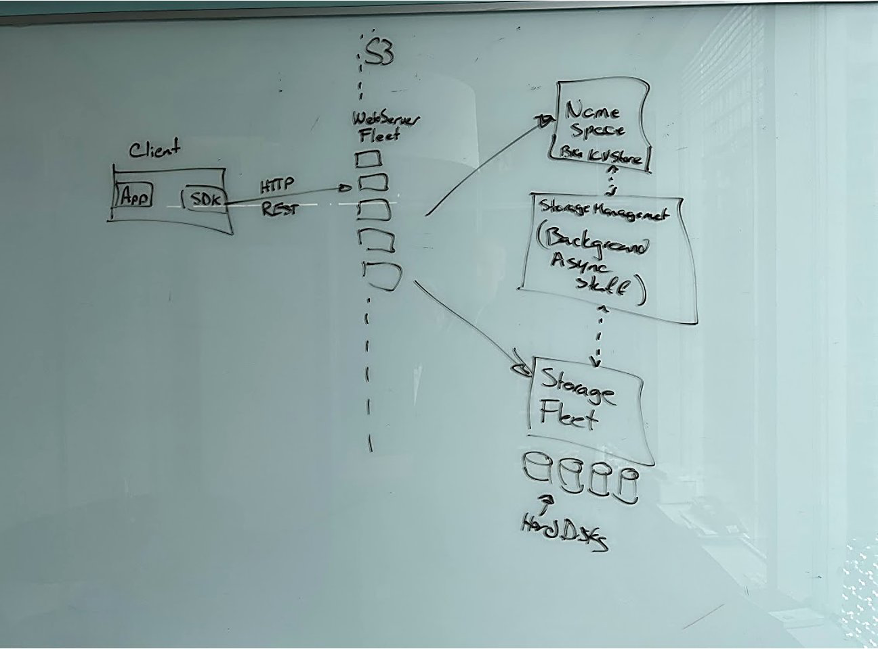

When I joined Amazon in 2017, I arranged to spend most of my first day at work with Seth Markle, an engineer who had been with S3 since the very early days. Seth patiently spent about six hours with me at a whiteboard, walking me through the architecture of the system. The fundamental design includes a frontend REST API fleet, a namespace service, a storage fleet of hard disks, and a background operations fleet handling replication and tiering tasks.

Significantly, AWS “ships its org chart” – each architectural component corresponds to organizational teams with their own leadership. S3 today comprises hundreds of microservices, with interactions governed by API-level contracts resembling independent businesses. This modular structure sometimes creates inefficiencies requiring substantial remedial work, but this mirrors standard software development challenges applied to team-level organization.

Two early observations

Before Amazon, I’d worked on research software, I’d worked on pretty widely adopted open-source software, and I’d worked on enterprise software and hardware appliances that were used in production inside some really large businesses. My first key observation was that I was going to have to change, and really broaden how I thought about software systems. In contrast to my prior experience of designing, building, testing, and shipping discrete software products, S3 functions as a living, breathing organism where developers, technicians, and customers all participate in one continuously evolving system. Customers purchase services expecting consistent excellence rather than static software.

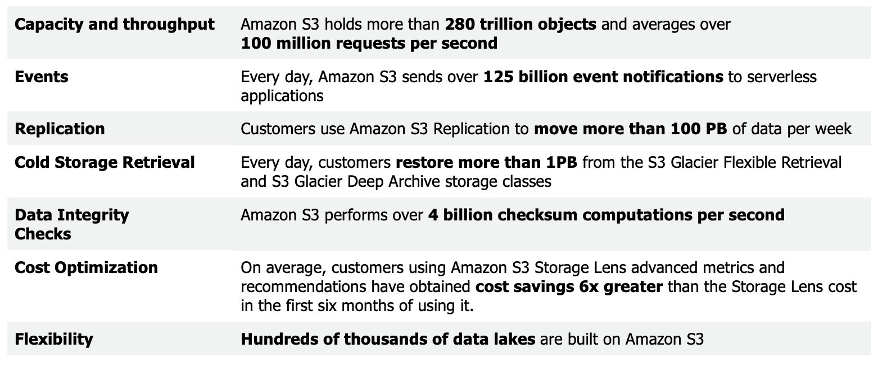

The second observation was that, although the whiteboard diagram was useful for sketching the structure of the system, it was also wildly misleading, because it completely obscured the scale of the system. Understanding S3’s true scale has taken years of engagement, and the consequences of that scale still surprise me.

Technical Scale: Scale and the physics of storage

It probably isn’t very surprising for me to mention that S3 is a really big system, and it is built using a LOT of hard disks.

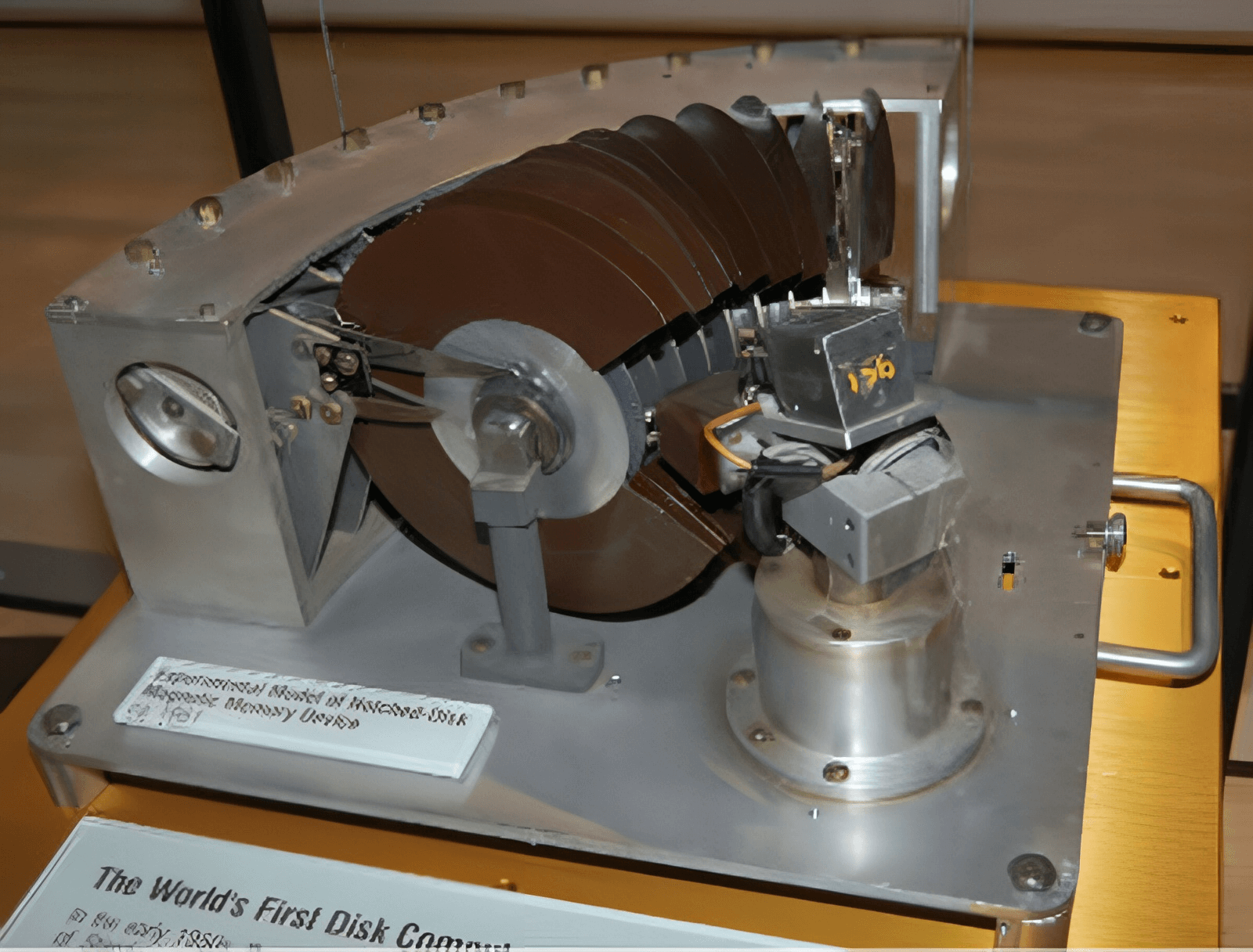

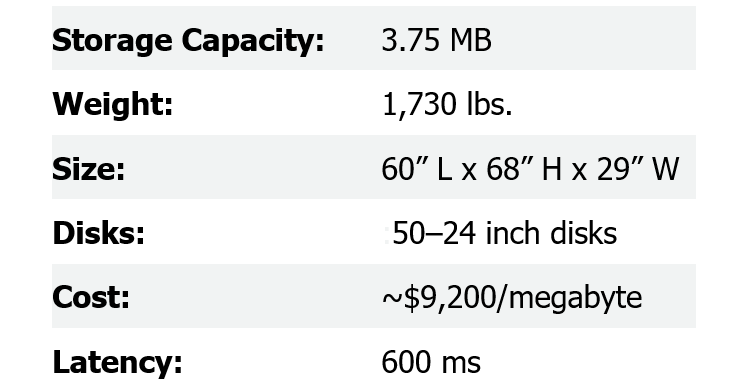

Hard disk technology has evolved dramatically since the IBM RAMAC in 1956. Capacity has improved 7.2 million times while physical size decreased 5,000-fold, yet random access performance improved only 150-fold due to mechanical limitations.

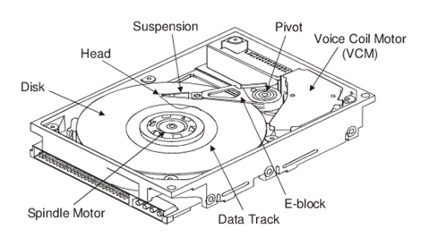

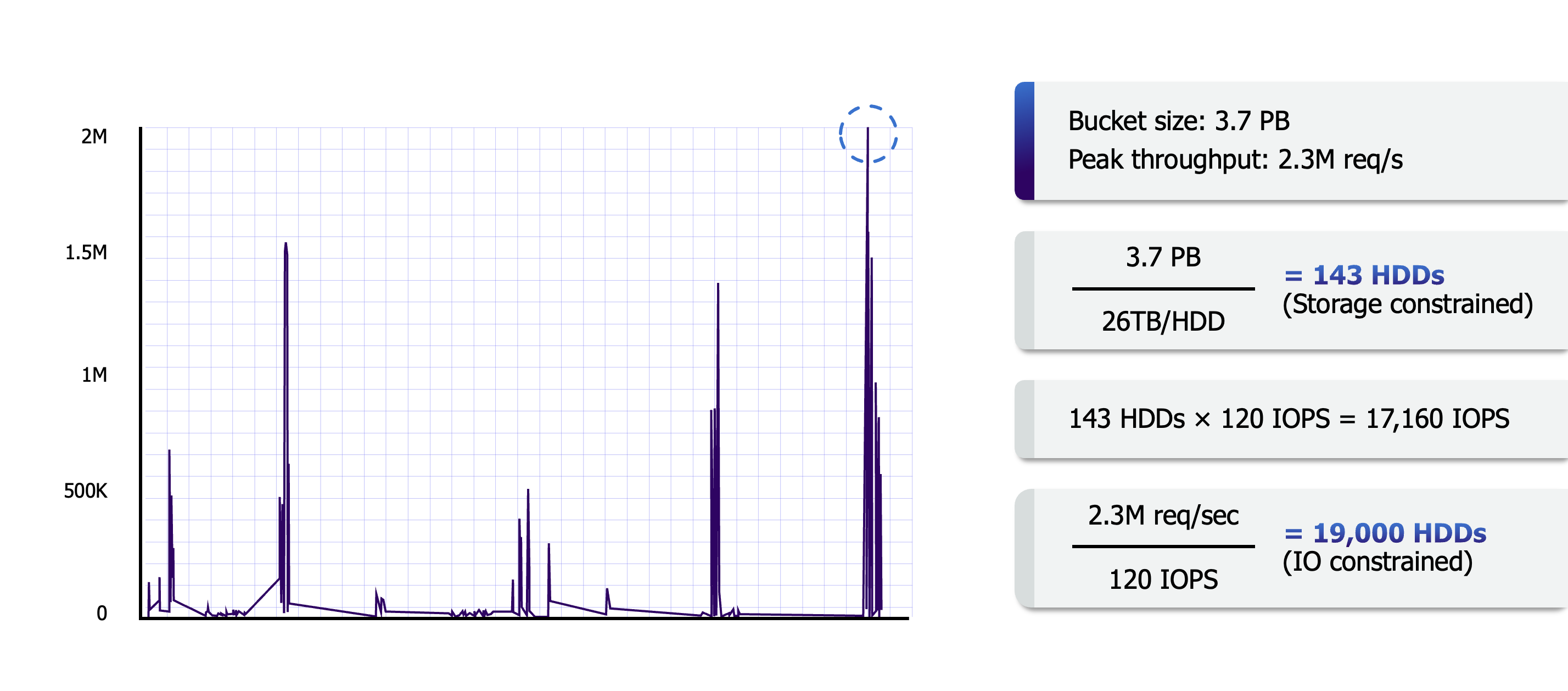

If you are doing random reads and writes to a drive as fast as you possibly can, you can expect about 120 operations per second. This unchanged performance metric shapes S3’s entire design philosophy.

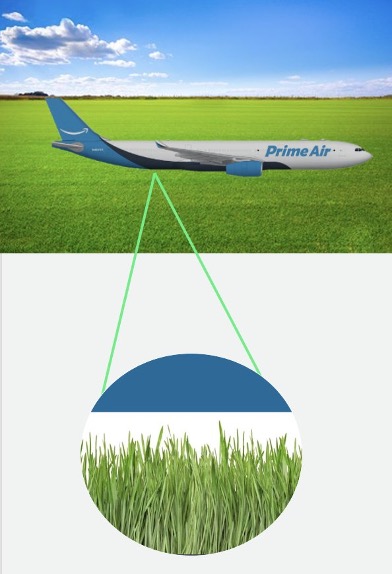

Imagine a hard drive head as a 747 flying over a grassy field at 75 miles per hour, with only two sheets of paper between the plane and grass. This demonstrates the extraordinary mechanical precision required. As drives grow to 26TB today with roadmaps pointing toward 200TB, the tension between growing capacity and flat performance becomes crucial to S3’s architectural decisions. The system must strategically scale by adopting the largest available drives to manage the ratio of storage to input/output operations.
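To make the capacity-versus-performance tension concrete, here is a small back-of-the-envelope sketch. It assumes the roughly 120 random operations per second quoted above stays flat as drives grow; the 26TB and 200TB sizes come from the text, while the 1TB and 4TB sizes are illustrative assumptions added for comparison.

```python
# Assumption: random I/O per drive stays flat at roughly 120 operations/second,
# the figure quoted in the text, regardless of drive capacity.
IOPS_PER_DRIVE = 120


def iops_per_tb(capacity_tb: float, iops: float = IOPS_PER_DRIVE) -> float:
    """Random I/O operations per second available for each terabyte stored."""
    return iops / capacity_tb


# 26 and 200 TB are from the text; 1 and 4 TB are illustrative assumptions.
for capacity_tb in (1, 4, 26, 200):
    print(f"{capacity_tb:>4} TB drive: {iops_per_tb(capacity_tb):7.2f} IOPS per TB")
```

Each terabyte on a larger drive gets a smaller share of the same fixed I/O budget, which is why the ratio of storage to I/O has to be managed deliberately.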

Managing heat: data placement and performance

So, with all this in mind, one of the biggest and most interesting technical scale problems that I’ve encountered is in managing and balancing I/O demand across a really large set of hard drives.

In S3 terminology, “heat” refers to the concentration of requests hitting particular disks. The challenge involves distributing data placement to prevent hotspots – situations where certain drives become overloaded and create queuing delays that ripple through the storage stack.

A critical insight emerges from S3’s massive scale: when millions of independent workloads aggregate on the system, individual request patterns become decorrelated from one another. While individual workloads tend to be bursty and unpredictable, their combination smooths into a more predictable aggregate demand pattern. Once aggregation reaches sufficient scale, it is difficult or impossible for any given workload to really influence the aggregate peak at all. This characteristic fundamentally enables S3 to balance load evenly across its disk fleet in ways that would be impossible for smaller systems.
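A toy simulation can illustrate the smoothing effect. This is only a sketch under simplifying assumptions: every workload is independent, idle most of the time, and bursts with a fixed probability at each time step. The burst probability, burst size, and workload counts are made up for illustration and are not S3 measurements.

```python
import random


def peak_to_mean(num_workloads: int, steps: int = 500,
                 burst_prob: float = 0.05, burst_size: float = 100.0,
                 seed: int = 0) -> float:
    """Ratio of peak aggregate demand to mean aggregate demand.

    Each workload independently bursts with probability burst_prob at every
    time step (an assumption; real workloads are messier).
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(steps):
        total = sum(burst_size
                    for _ in range(num_workloads)
                    if rng.random() < burst_prob)
        totals.append(total)
    mean = sum(totals) / len(totals)
    return max(totals) / mean if mean > 0 else float("inf")


for n in (1, 10, 100, 1000):
    print(f"{n:>5} workloads: peak/mean = {peak_to_mean(n):.2f}")
```

A single bursty workload has a peak far above its mean, while the aggregate of many such workloads has a peak much closer to its mean, so any one workload contributes little to the overall peak.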

Replication: data placement and durability

In storage systems, redundancy schemes are commonly used to protect data from hardware failures, but redundancy also helps manage heat. Simple replication – storing multiple copies across different disks – carries an expensive capacity overhead but provides flexibility by allowing reads from any replica, effectively balancing request traffic.

To reduce this capacity cost, S3 employs erasure coding techniques like Reed-Solomon encoding. This approach divides objects into k identity shards plus m additional parity shards. As long as k of the total (k+m) shards remain available, the object can be reconstructed. This strategy maintains comparable failure protection while consuming less storage space than pure replication.
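The idea can be sketched in a few lines. Note the substitution: the code below uses a single XOR parity shard (m = 1) as a deliberately simplified stand-in for Reed-Solomon, which needs finite-field arithmetic to support larger m. The function names and the k and m values are illustrative assumptions, not S3's parameters.

```python
from __future__ import annotations

from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k identity shards and append one XOR parity shard."""
    shard_len = -(-len(data) // k)              # ceiling division
    padded = data.ljust(shard_len * k, b"\0")   # zero-pad the tail
    shards = [padded[i * shard_len:(i + 1) * shard_len] for i in range(k)]
    return shards + [reduce(xor_bytes, shards)]


def reconstruct(shards: list[bytes | None], original_len: int) -> bytes:
    """Rebuild the object when at most one of the k+1 shards is None."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("single XOR parity tolerates only one lost shard")
    shards = list(shards)
    if missing:
        present = [s for s in shards if s is not None]
        # XOR of all surviving shards equals the one that was lost.
        shards[missing[0]] = reduce(xor_bytes, present)
    return b"".join(shards[:-1])[:original_len]


def storage_overhead(k: int, m: int) -> float:
    """Bytes stored per byte of object data for a k+m scheme."""
    return (k + m) / k


obj = b"hello, erasure coding"
shards = encode(obj, k=4)
shards[2] = None                                # simulate a failed disk
assert reconstruct(shards, len(obj)) == obj

# Hypothetical comparison: both schemes survive two lost pieces.
print("3 replicas:", storage_overhead(1, 2), "x")
print("k=4, m=2  :", storage_overhead(4, 2), "x")
```

In this hypothetical comparison, three-way replication and a k=4, m=2 code both tolerate the loss of any two pieces, but the erasure-coded layout stores half as many bytes.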

The impact of scale on data placement strategy

So, redundancy schemes let us divide our data into more pieces than we need to read in order to access it, and that in turn provides us with the flexibility to avoid sending requests to overloaded disks, but there’s more we can do to avoid heat.
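As a minimal sketch of that flexibility: if any k of the k+m shards are enough to read an object, a reader can simply skip the busiest disks. The disk names and queue depths below are made up, and this is not a description of S3's actual request scheduler.

```python
def pick_shards_to_read(shard_disks: list[str],
                        queue_depth: dict[str, int],
                        k: int) -> list[str]:
    """Choose the k disks with the shortest queues among those holding shards.

    shard_disks lists the disks holding this object's k+m shards;
    queue_depth maps a disk to its outstanding requests (hypothetical data).
    """
    if len(shard_disks) < k:
        raise ValueError("not enough shards available to reconstruct the object")
    return sorted(shard_disks, key=lambda disk: queue_depth.get(disk, 0))[:k]


# Hypothetical object stored as k=4 identity shards plus m=2 parity shards.
disks = ["d1", "d2", "d3", "d4", "d5", "d6"]
depths = {"d1": 3, "d2": 40, "d3": 1, "d4": 7, "d5": 55, "d6": 2}
print(pick_shards_to_read(disks, depths, k=4))
```

Here the two hottest disks, d2 and d5, are never touched, yet the object can still be reconstructed from the four shards that were read.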

S3 deliberately spreads objects within each customer’s bucket across millions of individual disks rather than concentrating them. This distribution provides two major benefits: customer data occupies only a tiny fraction of any single disk, preventing individual workloads from creating hotspots, and it allows individual workloads to burst to a level of performance that just wouldn’t be practical in standalone systems. A genomics customer running parallel analysis across thousands of Lambda functions can distribute across over a million drives simultaneously.

The human factors

Beyond the technology itself, there are human factors that make S3 – or any complex system – what it is. One of the core tenets at Amazon is that we want engineers and teams to fail fast, and safely. We want them to always have the confidence to move quickly as builders, while still remaining completely obsessed with delivering highly durable storage. One strategy we use to help with this in S3 is a process called “durability reviews.” It’s a human mechanism that’s not in the statistical 11 9s model, but it’s every bit as important.

When an engineer makes changes that can result in a change to our durability posture, we do a durability review. The process borrows an idea from security research: the threat model. The goal is to provide a summary of the change, a comprehensive list of threats, then describe how the change is resilient to those threats. In security, writing down a threat model encourages you to think like an adversary and imagine all the nasty things that they might try to do to your system. In a durability review, we encourage the same “what are all the things that might go wrong” thinking, and really encourage engineers to be creatively critical of their own code. The process does two things very well:

- It encourages authors and reviewers to really think critically about the risks we should be protecting against.

- It separates risk from countermeasures, and lets us have separate discussions about the two sides.

When working through durability reviews we take the durability threat model, and then we evaluate whether we have the right countermeasures and protections in place. When we are identifying those protections, we really focus on identifying coarse-grained “guardrails”. These are simple mechanisms that protect you from a large class of risks. Rather than nitpicking through each risk and identifying individual mitigations, we like simple and broad strategies that protect against a lot of stuff.

Another example of a broad strategy is demonstrated in a project we kicked off a few years back to rewrite the bottom-most layer of S3’s storage stack – the part that manages the data on each individual disk. The new storage layer is called ShardStore, and when we decided to rebuild that layer from scratch, one guardrail we put in place was to adopt a really exciting set of techniques called “lightweight formal verification”. Our team decided to shift the implementation to Rust in order to get type safety and structured language support to help identify bugs sooner, and even wrote libraries that extend that type safety to apply to on-disk structures. From a verification perspective, we built a simplified model of ShardStore’s logic (also in Rust) and checked it into the same repository alongside the real production ShardStore implementation. This model dropped all the complexity of the actual on-disk storage layers and hard drives, and instead acted as a compact but executable specification. It wound up being about 1% of the size of the real system, but allowed us to perform testing at a level that would have been completely impractical to do against a hard drive with 120 available IOPS. We even managed to publish a paper about this work at SOSP.

From here, we’ve been able to build tools and use existing techniques, like property-based testing, to generate test cases that verify that the behaviour of the implementation matches that of the specification. The really cool bit of this work wasn’t anything to do with either designing ShardStore or using formal verification tricks. It was that we managed to kind of “industrialize” verification, taking really cool, but kind of research-y techniques for program correctness, and getting them into code where normal engineers who don’t have PhDs in formal verification can contribute to maintaining the specification, and that we could continue to apply our tools with every single commit to the software. Using verification as a guardrail has given the team confidence to develop faster, and it has endured even as new engineers joined the team.
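The general shape of checking an implementation against an executable specification can be sketched as follows. This is a toy in Python rather than Rust, and it has nothing to do with ShardStore's actual code: `ModelStore`, `LogStore`, and `check_equivalence` are hypothetical names, and a hand-rolled random generator stands in for a real property-based testing library, which would also shrink failing cases to minimal examples.

```python
import random


class ModelStore:
    """Reference model: a plain dict acts as the executable specification."""

    def __init__(self):
        self.data = {}

    def put(self, key, value):
        self.data[key] = value

    def delete(self, key):
        self.data.pop(key, None)

    def get(self, key):
        return self.data.get(key)


class LogStore:
    """Toy implementation under test: append-only log plus an offset index."""

    def __init__(self):
        self.log = []     # (key, value) records; value None marks a delete
        self.index = {}   # key -> offset of its latest live record

    def put(self, key, value):
        self.index[key] = len(self.log)
        self.log.append((key, value))

    def delete(self, key):
        self.log.append((key, None))
        self.index.pop(key, None)

    def get(self, key):
        offset = self.index.get(key)
        return None if offset is None else self.log[offset][1]

    def compact(self):
        """Rewrite the log keeping only live records; visible state is unchanged."""
        live = [(key, self.log[offset][1]) for key, offset in self.index.items()]
        self.log = live
        self.index = {key: i for i, (key, _) in enumerate(live)}


def check_equivalence(num_sequences=200, ops_per_sequence=50, seed=0):
    """Run random operation sequences and compare implementation to model."""
    rng = random.Random(seed)
    keys = ["a", "b", "c"]
    for _ in range(num_sequences):
        model, impl = ModelStore(), LogStore()
        for _ in range(ops_per_sequence):
            op = rng.choice(["put", "delete", "compact"])
            key = rng.choice(keys)
            if op == "put":
                value = rng.randrange(1000)
                model.put(key, value)
                impl.put(key, value)
            elif op == "delete":
                model.delete(key)
                impl.delete(key)
            else:
                impl.compact()   # internal-only step with no model counterpart
            for k in keys:
                assert impl.get(k) == model.get(k), (op, key, k)
    return num_sequences * ops_per_sequence


print(check_equivalence(), "operations checked against the model")
```

The model ignores everything about layout and compaction, which is what keeps it small enough to read and maintain, while the random sequences exercise interleavings that nobody would think to write by hand.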

Durability reviews and lightweight formal verification are two examples of how we take a really human, and organizational view of scale in S3. The lightweight formal verification tools that we built and integrated are really technical work, but they were motivated by a desire to let our engineers move faster and be confident even as the system becomes larger and more complex over time. Durability reviews, similarly, are a way to help the team think about durability in a structured way, but also to make sure that we are always holding ourselves accountable for a high bar for durability as a team. There are many other examples of how we treat the organization as part of the system, and it’s been interesting to see how once you make this shift, you experiment and innovate with how the team builds and operates just as much as you do with what they are building and operating.

Scaling myself: Solving hard problems starts and ends with “Ownership”

The last example of scale that I’d like to tell you about is an individual one. I joined Amazon as an entrepreneur and a university professor. I’d had tens of grad students and built an engineering team of about 150 people at Coho. In the roles I’d had in the university and in startups, I loved having the opportunity to be technically creative, to build really cool systems and incredible teams, and to always be learning. But I’d never had to do that kind of role at the scale of software, people, or business that I suddenly faced at Amazon.

One of my favourite parts of being a CS professor was teaching the systems seminar course to graduate students. This was a course where we’d read and generally have pretty lively discussions about a collection of “classic” systems research papers. One of my favourite parts of teaching that course was that about half way through it we’d read the SOSP Dynamo paper. I looked forward to a lot of the papers that we read in the course, but I really looked forward to the class where we read the Dynamo paper, because it was from a real production system that the students could relate to. It was Amazon, and there was a shopping cart, and that was what Dynamo was for. It’s always fun to talk about research work when people can map it to real things in their own experience.

But also, technically, it was fun to discuss Dynamo, because Dynamo was eventually consistent, so it was possible for your shopping cart to be wrong.

I loved this, because it was where we’d discuss what you do, practically, in production, when Dynamo was wrong. When a customer was able to place an order only to later realize that the last item had already been sold. You detected the conflict but what could you do? The customer was expecting a delivery.

This example may have stretched the Dynamo paper’s story a little bit, but it drove to a great punchline. Because the students would often spend a bunch of discussion trying to come up with technical software solutions. Then someone would point out that this wasn’t it at all. That ultimately, these conflicts were rare, and you could resolve them by getting support staff involved and making a human decision. It was a moment where, if it worked well, you could take the class from being critical and engaged in thinking about tradeoffs and design of software systems, and you could get them to realize that the system might be bigger than that. It might be a whole organization, or a business, and maybe some of the same thinking still applied.

Now that I’ve worked at Amazon for a while, I’ve come to realize that my interpretation wasn’t all that far from the truth – in terms of how the services that we run are hardly “just” the software. I’ve also realized that there’s a bit more to it than what I’d gotten out of the paper when teaching it. Amazon spends a lot of time really focused on the idea of “ownership.” The term comes up in a lot of conversations – like “does this action item have an owner?” – meaning who is the single person that is on the hook to really drive this thing to completion and make it successful.

The focus on ownership actually helps understand a lot of the organizational structure and engineering approaches that exist within Amazon, and especially in S3. To move fast, to keep a really high bar for quality, teams need to be owners. They need to own the API contracts with other systems their service interacts with, they need to be completely on the hook for durability and performance and availability, and ultimately, they need to step in and fix stuff at three in the morning when an unexpected bug hurts availability. But they also need to be empowered to reflect on that bug fix and improve the system so that it doesn’t happen again. Ownership carries a lot of responsibility, but it also carries a lot of trust – because to let an individual or a team own a service, you have to give them the leeway to make their own decisions about how they are going to deliver it. It’s been a great lesson for me to realize how much allowing individuals and teams to directly own software, and more generally own a portion of the business, allows them to be passionate about what they do and really push on it. It’s also remarkable how much getting ownership wrong can have the opposite result.

Encouraging ownership in others

I’ve spent a lot of time at Amazon thinking about how important and effective the focus on ownership is to the business, but also about how effective an individual tool it is when I work with engineers and teams. I realized that the idea of recognizing and encouraging ownership had actually been a really effective tool for me in other roles. Here’s an example: In my early days as a professor at UBC, I was working with my first set of graduate students and trying to figure out how to choose great research problems for my lab. I vividly remember a conversation I had with a colleague who was also a pretty new professor at another school. When I asked them how they choose research problems with their students, they flipped. They had a surprisingly frustrated reaction. “I can’t figure this out at all. I have like 5 projects I want students to do. I’ve written them up. They hum and haw and pick one up but it never works out. I could do the projects faster myself than I can teach them to do it.”

And ultimately, that’s actually what this person did – they were amazing, they did a bunch of really cool stuff, and wrote some great papers, and then went and joined a company and did even more cool stuff. But when I talked to grad students that worked with them what I heard was, “I just couldn’t get invested in that thing. It wasn’t my idea.”

As a professor, that was a pivotal moment for me. From that point forward, when I worked with students, I tried really hard to ask questions, and listen, and be excited and enthusiastic. But ultimately, my most successful research projects were never mine. They were my students’, and I was lucky to be involved. The thing that I don’t think I really internalized until much later, working with teams at Amazon, was that one big contribution to those projects being successful was that the students really did own them. Once students really felt like they were working on their own ideas, and that they could personally evolve them and drive them to a new result or insight, it was never difficult to get them to really invest in the work and the thinking to develop and deliver it. They just had to own it.

And this is probably one area of my role at Amazon that I’ve thought about and tried to develop and be more intentional about than anything else I do. As a really senior engineer in the company, of course I have strong opinions and I absolutely have a technical agenda. But if I interact with engineers by just trying to dispense ideas, it’s really hard for any of us to be successful. It’s a lot harder to get invested in an idea that you don’t own. So, when I work with teams, I’ve kind of taken the strategy that my best ideas are the ones that other people have instead of me. I consciously spend a lot more time trying to develop problems, and to do a really good job of articulating them, rather than trying to pitch solutions. There are often multiple ways to solve a problem, and picking the right one is letting someone own the solution. And I spend a lot of time being enthusiastic about how those solutions are developing (which is pretty easy) and encouraging folks to figure out how to have urgency and go faster (which is often a little more complex). But it has, very sincerely, been one of the most rewarding parts of my role at Amazon to approach scaling myself as an engineer being measured by making other engineers and teams successful, helping them own problems, and celebrating the wins that they achieve.

Closing thought

I came to Amazon expecting to work on a really big and complex piece of storage software. What I learned was that every aspect of my role was unbelievably bigger than that expectation. I’ve learned that the technical scale of the system is so enormous that its workload, structure, and operations are not just bigger, but foundationally different from the smaller systems that I’d worked on in the past. I learned that it wasn’t enough to think about the software, that “the system” was also the software’s operation as a service, the organization that ran it, and the customer code that worked with it. I learned that the organization itself, as part of the system, had its own scaling challenges and provided just as many problems to solve and opportunities to innovate. And finally, I learned that to really be successful in my own role, I needed to focus on articulating the problems and not the solutions, and to find ways to support strong engineering teams in really owning those solutions.

I’m hardly done figuring any of this stuff out, but I sure feel like I’ve learned a bunch so far. Thanks for taking the time to listen.

This article originally appeared on All Things Distributed.

I also gave a keynote at USENIX FAST ’23 that covers much of the same material in talk form.